NEP 2020 · 2026-04-25 · ~12 min read

The National Education Policy 2020 was published nearly six years ago. The UGC and AICTE frameworks that followed, covering the four-year undergraduate programme (FYUP), the multidisciplinary requirement, and the minor structure, are now operationally live at most private universities. The AI minor, in particular, has gone from an interesting option to a near-default expectation among prospective students and their parents.

But "having an AI minor" is doing a lot of work in many university brochures. The actual minor on the ground often falls short of what NEP envisages, what students need, or what recruiters will recognise.

This piece is a practical guide for the people who actually have to make the AI minor work — Deans, programme heads, academic registrars, NEP implementation cells. It is written in the spirit of "how to do this well, given the constraints," not "how to comply on paper."

What NEP actually requires of an AI minor

The relevant NEP and FYUP framework provisions, paraphrased, set five non-negotiables.

- A minor must carry 18–24 credits, depending on the framework variant the university adopts.

- Minor courses must be demonstrably outside the core curriculum of the major's home department.

- Multidisciplinary integration is not optional — it is the design intent of the FYUP.

- Assessment must be substantive and credit-bearing, on par with major courses.

- Credit must be transferable within the Academic Bank of Credits (ABC) framework.

A correctly designed AI minor must satisfy all five — not just the credit count. The credit count is the easy part; the other four are where most implementations come apart.

Where universities most commonly go wrong

From the AI minor structures we have seen across about forty private universities, four implementation patterns recur — and all four produce weak outcomes.

1. The "Python and a model" minor

Six courses, all coded as "Introduction to X," none of which require students to ship anything. Credits accumulate; capability does not. Recruiters see the transcript and ignore it.

2. The "borrowed CS courses" minor

The minor is composed of CSE department electives that already exist, retrofitted with the "AI minor" label. The CSE department is not staffed for non-CS students; the courses assume programming proficiency the minor's audience does not have; attrition is severe.

3. The "outsourced edtech wrapper" minor

A third-party content provider delivers most of the minor remotely, with university faculty acting as proctors. Quality is variable, assessment is automated, integration with the rest of the degree is nil.

4. The "vague AI literacy" minor

A semester of "AI ethics and society," a semester of generative AI demos, a project. Pleasant; not credentialable.

The trap most universities fall into is not laziness — it is sequencing. They declare the minor before they have the faculty to teach it, then patch the gap with whichever of the four patterns above is cheapest.

What a well-designed AI minor looks like

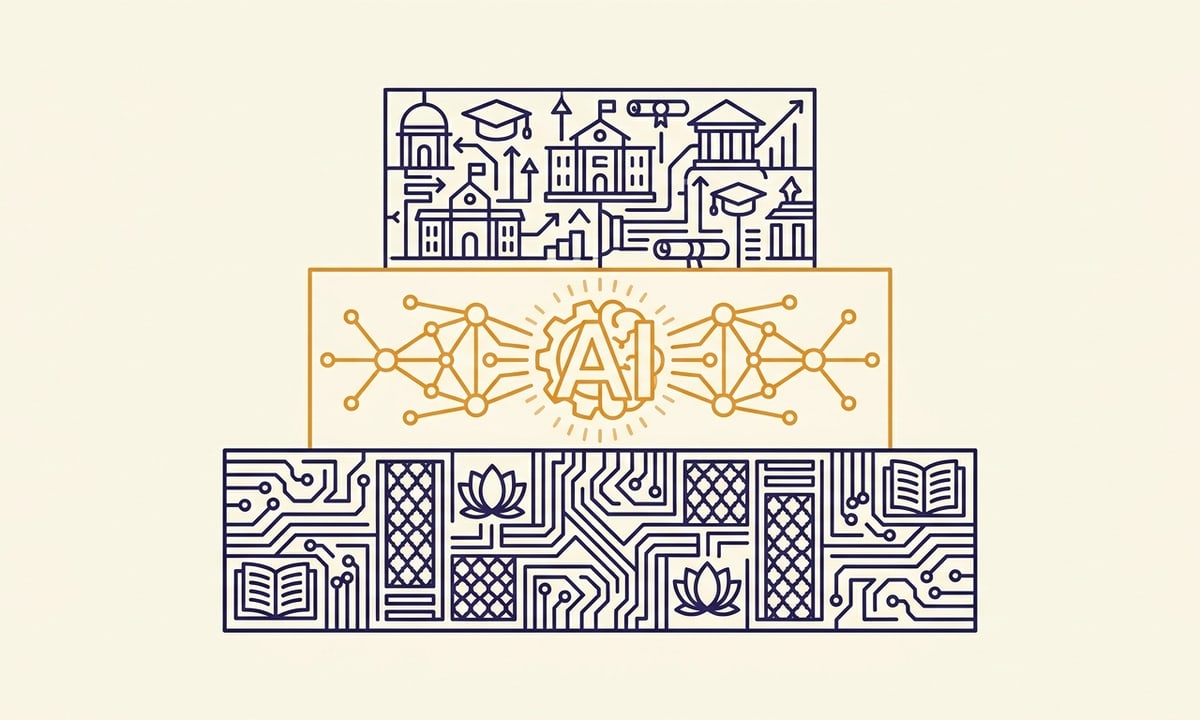

The version we recommend, which is also the version Kompas delivers as Track D (AI Literacy for All Disciplines), has three layers totalling 20–24 credits.

Layer 1 — Universal foundation (8 credits)

A two-semester foundation course taken in common by all minor students regardless of major. It covers:

- How modern AI systems actually work, at a level of detail a serious non-CS undergraduate can hold

- The data lifecycle and how to reason about it

- Practical use of foundation models, including writing good prompts, building simple applications, and evaluating outputs

- Bias, safety, and the limits of AI

Notably, this layer does not teach Python from scratch. Students use AI tools through code-aware interfaces and study the underlying models conceptually. Programming-track students who want depth go to Track A instead.

Layer 2 — Discipline module (8–12 credits)

A two- or three-course sequence specific to the student's major.

The discipline modules are where the minor stops being generic and starts producing real, recruiter-recognisable capability. They are also where most badly designed minors collapse, because building twelve discipline-specific AI modules at quality is genuinely hard — and not something a CS department can do alone.

Layer 3 — Career bridge (4 credits)

A one-semester capstone that integrates Layer 1 and Layer 2 into a portfolio-grade project. It is assessed by a panel that includes at least one external industry assessor.

This is what differentiates a credentialable AI minor from a paper one. Without it, the rest of the structure is academic theatre.
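As a quick arithmetic check, the layer credits above sum to 8 + (8 to 12) + 4 = 20–24 credits, which sits inside the 18–24 band listed among the non-negotiables; the upper end assumes a three-course discipline module. The minimal Python sketch below encodes that check using only the figures in this article. The dictionary layout and function names are illustrative, not part of any official tool.

```python
# Credit ranges per layer as stated in this article: (minimum, maximum).
LAYERS = {
    "Layer 1: Universal foundation": (8, 8),
    "Layer 2: Discipline module": (8, 12),
    "Layer 3: Career bridge": (4, 4),
}

# Minor credit band from the NEP/FYUP provisions paraphrased earlier.
NEP_MINOR_BAND = (18, 24)


def total_credit_range(layers):
    """Sum the minimum and maximum credits across all layers."""
    low = sum(minimum for minimum, _ in layers.values())
    high = sum(maximum for _, maximum in layers.values())
    return low, high


def within_band(credit_range, band):
    """True if every possible total falls inside the permitted band."""
    low, high = credit_range
    return band[0] <= low and high <= band[1]


if __name__ == "__main__":
    total = total_credit_range(LAYERS)
    print(f"Total minor credits: {total[0]}-{total[1]}")
    print("Inside NEP band:", within_band(total, NEP_MINOR_BAND))
```

If your university has adopted a framework variant with a different band, substitute it in `NEP_MINOR_BAND` before relying on the result.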

Faculty load is the real bottleneck

The constraint that limits AI minor quality on most campuses is not money. It is faculty supply.

A 24-credit minor across, say, eight discipline tracks at a mid-size university with 4,000 minor enrolments needs roughly 12–18 specialised, AI-fluent instructors who can teach non-CS students. Most universities have two or three.

The shortage compounds. Existing CS faculty are pulled into the minor and lose research time; some leave for industry, which pushes more teaching onto those who remain, and the cycle accelerates.
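The staffing figure depends heavily on section size and teaching load, which vary by campus. The back-of-envelope estimator below lets a programme office plug in its own numbers. Every parameter value in it is a hypothetical assumption: the minor is taken as six 4-credit courses, one per semester, and each instructor as teaching three minor sections per semester. Under those assumptions, section sizes of 75 and 120 give 18 and 12 instructors respectively. That happens to match the 12–18 range above, but it is an illustration, not the method behind that figure.

```python
import math


def instructors_needed(
    enrolled_students: int,
    courses_per_student_per_semester: float,
    section_size: int,
    sections_per_instructor: int,
) -> int:
    """Rough count of instructors needed to staff one semester of the minor.

    All inputs are hypothetical planning assumptions, not NEP/UGC figures.
    Sections are pooled across discipline tracks; if tracks cannot share
    sections, rounding up per track will push the real figure higher.
    """
    seats = enrolled_students * courses_per_student_per_semester
    sections = math.ceil(seats / section_size)  # a partial section still needs a teacher
    return math.ceil(sections / sections_per_instructor)


if __name__ == "__main__":
    # Hypothetical: 4,000 enrolled, one minor course per student per semester,
    # three sections per instructor; compare two section sizes.
    for size in (75, 120):
        n = instructors_needed(4000, 1, size, 3)
        print(f"Section size {size}: {n} instructors")
```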

Track E — Faculty & Research Enablement exists specifically to address this constraint. It is a 12-month pathway for existing university faculty (from any department) to develop the toolchain fluency and pedagogical patterns needed to teach the AI minor. We treat faculty enablement as the precondition for everything else, not an afterthought.

Assessment is the credentialing problem

The assessment problem under NEP for an AI minor is harder than for most subjects. Two reasons:

- Code volume is not capability. A student who produces a 500-line ML model with heavy AI assistance is not necessarily more capable than one who wrote 50 lines unaided. Traditional grading rubrics, which tend to reward volume and completeness, rarely detect the difference.

- Cohort sizes scale awkwardly. A 200-student minor cohort is too large for thoughtful project assessment by a single instructor and too small to justify automated grading.

The one rule that actually matters: grade the demonstrated capability, not the line count. Everything else in the rubric follows from that.

Our Skill Score rubric is built on that rule and addresses both problems: it grades demonstrated capability rather than line count, and it is structured for panel review at scale. It is published openly, and universities are welcome to use it directly or adapt it.

A two-year roadmap to a working minor

For a programme office starting from scratch, the practical roadmap looks like this.

- Months 1–3 — Diagnose. Map the existing AI-relevant courses, the faculty available, and the minor demand by major. Decide which 4–6 discipline modules to launch with — not all twelve at once.

- Months 4–6 — Build Layer 1. The universal foundation course is the riskiest piece because it is taken by everyone and sets the tone. Build it well.

- Months 7–9 — Build Layer 2. Build modules in the chosen disciplines. Bring in or train discipline-specialist faculty.

- Months 10–12 — Pilot. Run with one cohort, one or two majors, for a full semester. Gather evidence.

- Months 13–18 — Scale. Move to all majors with the prepared modules. Add new discipline modules in subsequent academic years.

This is roughly a two-year journey from a standing start to a working AI minor at scale. It can move faster if you partner, for both curriculum and faculty.

Where Kompas fits in this picture

We deliver Layer 1, Layer 2, and Layer 3 as a fully integrated Track D on partner campuses, with resident faculty and the Skill Score baked in. The full structure, illustrative roadmap, and engagement model are on our for-VCs-and-deans page.

If the minor is something your university wants to do well rather than just declare, the conversation is worth having.